A refrain I keep repeating is that many things can be viewed as a prediction game, and that better prediction is just better compression. When someone says something is unpredictable, what they actually mean is that the data is highly incompressible - maybe the data-generating function has high Kolmogorov complexity, or comes from a treacherous search space.

So, for example, I can tell you that at their core there is not much difference between a scientist and gzip or WinZip (actually the best comparison would be 7zip, as I explain later). A scientist is trying to make sense of data by creating a simpler description that makes predictions - i.e. compression.

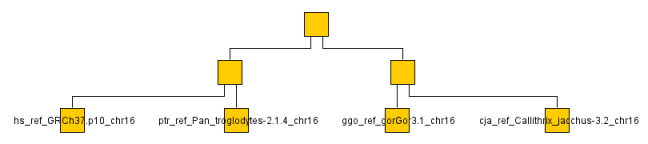

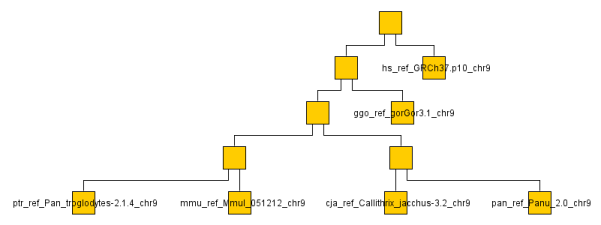

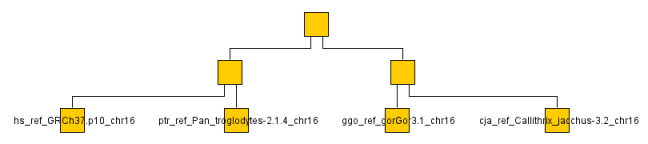

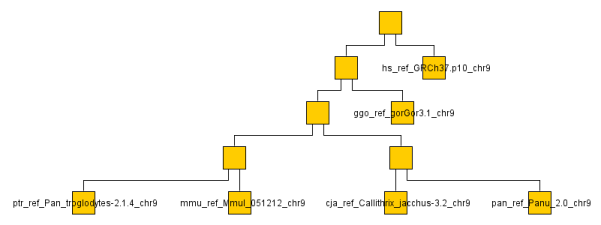

This can be taken literally by doing actual science using something like 7zip. The trick is a very simple technique called normalized compression distance (NCD), which measures the similarity between two bit strings by approximating their normalized information distance. The latter is itself uncomputable, since it is based on the Kolmogorov complexity function. For example, one can compare genomes and cluster them into phylogenetic trees using 7zip.
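To make this concrete, here is a minimal sketch of NCD in Python, using the usual definition NCD(x, y) = (C(xy) - min(C(x), C(y))) / max(C(x), C(y)), where C is the compressed size. It is not the code used for this post: Python's standard `lzma` module stands in for 7zip's PPMd as an assumption, and the names `compressed_size` and `ncd` are made up for illustration.

```python
import lzma


def compressed_size(data: bytes) -> int:
    """Size in bytes of the LZMA-compressed data: a computable stand-in for K(x)."""
    # Assumption: LZMA (preset 9) replaces the PPMd compressor mentioned in the post.
    return len(lzma.compress(data, preset=9))


def ncd(x: bytes, y: bytes) -> float:
    """Normalized compression distance between two byte strings.

    Values near 0 mean the strings share most of their structure,
    values near 1 mean the compressor found almost nothing in common.
    """
    cx = compressed_size(x)
    cy = compressed_size(y)
    cxy = compressed_size(x + y)  # compress the concatenation
    return (cxy - min(cx, cy)) / max(cx, cy)
```

Calling `ncd` on every pair of files gives a distance matrix that can be fed to any clustering algorithm. The container format adds a fixed overhead to every compressed size, so very short inputs give noisy distances.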

To do so I first used the PPMd algorithm from the 7zip library to compare pairs of files (LZW or gzip will not do because their context is too small, gzip's window being only 32 KB, though using a larger dictionary cancels this). I then wrote an implementation of the nearest-neighbor chain algorithm for clustering (in F#, no errors, it just worked on first go! Also, in retrospect I see this is easily adaptable to Huffman coding).
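For readers who have not met it, the nearest-neighbor chain algorithm can be sketched roughly as follows. This is a Python illustration, not the F# implementation mentioned above. Average linkage is my assumption (the algorithm needs a "reducible" linkage, and average linkage is one), and the function name `nn_chain` and the merge format are hypothetical.

```python
def nn_chain(dist):
    """Agglomerative clustering with the nearest-neighbor chain algorithm.

    dist: symmetric n x n matrix (list of lists) of pairwise distances.
    Returns a list of merges (a, b, height). Ids 0..n-1 are the inputs,
    ids n, n+1, ... are the clusters created by successive merges.
    Assumption: average linkage, updated with the Lance-Williams formula.
    """
    n = len(dist)
    d = {i: {j: dist[i][j] for j in range(n) if j != i} for i in range(n)}
    size = {i: 1 for i in range(n)}
    merges, chain, next_id = [], [], n

    while len(d) > 1:
        if not chain:
            chain.append(next(iter(d)))  # start a new chain anywhere
        while True:
            top = chain[-1]
            prev = chain[-2] if len(chain) > 1 else None
            # Nearest neighbor of the chain's top; ties go to the previous
            # element so the chain always terminates.
            nearest = min(d[top], key=lambda c: (d[top][c], c != prev))
            if nearest == prev:
                break  # top and prev are mutual nearest neighbors
            chain.append(nearest)

        b, a = chain.pop(), chain.pop()
        height = d[a][b]
        row_a, row_b = d.pop(a), d.pop(b)
        sa, sb = size.pop(a), size.pop(b)
        new, next_id = next_id, next_id + 1
        others = list(d)
        d[new] = {}
        for c in others:
            # Average linkage: size-weighted mean of the two old distances.
            dc = (sa * row_a[c] + sb * row_b[c]) / (sa + sb)
            d[new][c] = d[c][new] = dc
            del d[c][a], d[c][b]
        size[new] = sa + sb
        merges.append((a, b, height))
    return merges
```

The merges come out in chain order rather than sorted by height, so sort them by height before drawing a dendrogram. The rest of the chain stays valid after each merge, which is what keeps the number of nearest-neighbor searches linear in the number of clusters.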

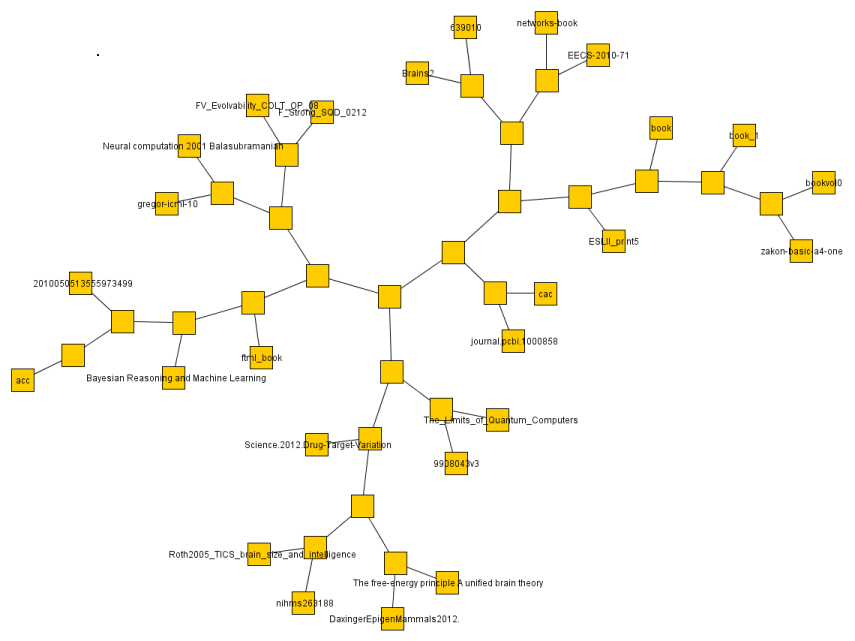

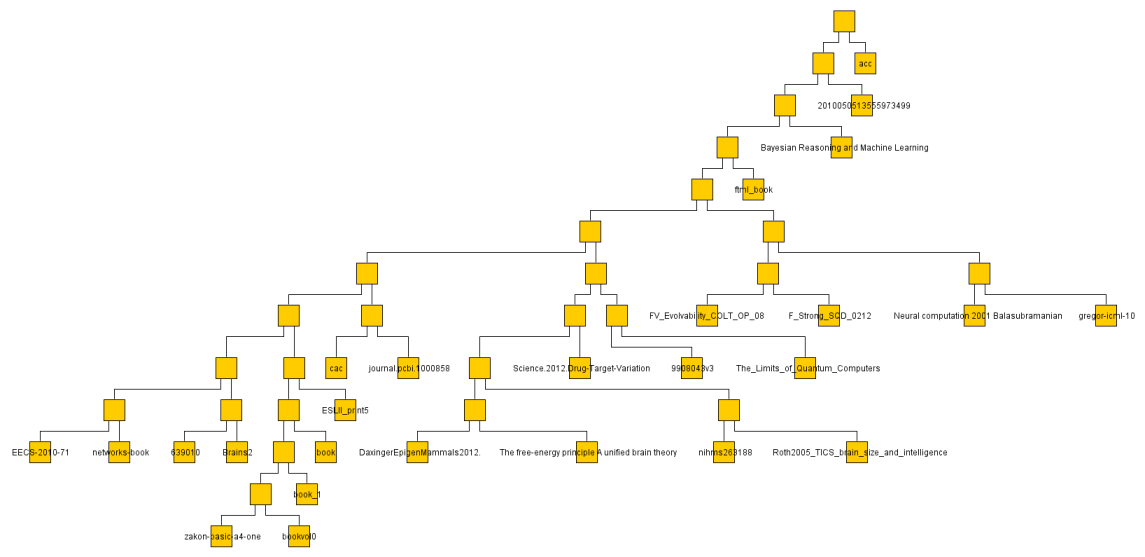

Of course I don't have the time or bandwidth to compare whole genomes, so I settled for a couple of chromosomes across a handful of monkeys/apes (gorilla, chimp, baboon, rhesus monkey, macaque and human). You can see the resulting graph below, even though looking at a single chromosome or two is not very representative. The O(N^2) algorithm ran through the ~1 GB data set in a few minutes on my machine.

I did the same with some 30 or so text documents (much smaller so faster) and got a result much more easily judged.

Marcus Hutter has an excellent argument for why being able to compress English text at its expected entropy rate of ~1 bit per character would necessarily signify an AI. In fact, top entries in his competition use neural networks in their compression algorithms and take nearly 10 hours to compress 1 GB, blurring the illusory boundary between machine learning and compression. Alas, while impressive, they are still mostly limited to the word level. To do better, concepts will need to be incorporated.

You can look at it this way. Near-perfect compression implies a couple of things. It implies the ability to understand ambiguous contexts, non-specific references and vague language. Such an algorithm would require sophisticated knowledge not just of grammar but of facts and culture, hence it could read and write English as well as any human.

Consider a simple example of compressing a series of digits such as 14159265... At first this looks incompressible, but if you recognized it as the decimal portion of pi you could simply represent it symbolically. Of course, using all of human knowledge as the model amounts to a mere lookup table and runs counter to Occam's Razor. It would be intelligence through brute-force stupidity, which is not even computationally tractable - hence the need for powerful but smaller models. The more intelligent the model, the smaller it makes the data's description length: Length(Model) - log2(P(Data | Model)). We prefer the smaller model not because it is necessarily better but because, all things being equal, it is much less likely to break down far into the future.
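A toy calculation shows how the two terms trade off. This is only a sketch under stated assumptions: the data are 100 coin flips, the models are i.i.d. coins, and the 8 bits charged for writing down the biased coin's parameter is an arbitrary illustrative cost, as are the function names.

```python
import math


def data_bits(bits, p_one):
    """-log2 P(data | model) for an i.i.d. coin that shows 1 with probability p_one."""
    return -sum(math.log2(p_one if b else 1.0 - p_one) for b in bits)


def description_length(bits, p_one, model_bits):
    """Two-part code length: Length(Model) - log2(P(Data | Model))."""
    return model_bits + data_bits(bits, p_one)


data = [1] * 90 + [0] * 10  # 100 flips, 90% ones

# Fair coin: nothing to write down about the model, 1 bit per flip.
fair = description_length(data, 0.5, model_bits=0)

# Biased coin: assume 8 bits to state p = 0.9, then far fewer bits per flip.
biased = description_length(data, 0.9, model_bits=8)

print(f"fair coin:   {fair:.1f} bits")
print(f"biased coin: {biased:.1f} bits")
```

The biased coin pays 8 bits up front and still wins, at roughly 55 bits against 100. A lookup table of all 100 flips would predict the data perfectly but cost about 100 bits of model, which is no compression at all.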

I hope after reading this you now have an appreciation for the intelligence behind 7zip (the best open source compression library btw) and how learning, compression, coding and prediction are all related.

Below are the clusters created from the NCDs.

Below is the code. It was not written with optimality in mind although it is still fairly performant (I like that word so nya).

lit.er.al.ly adv.

Usage Problem

Usage Note: For more than a hundred years, critics have remarked on the incoherency of using literally in a way that suggests the exact opposite of its primary sense of "in a manner that accords with the literal sense of the words." In 1926, for example, H.W. Fowler cited the example "The 300,000 Unionists ... will be literally thrown to the wolves." The practice does not stem from a change in the meaning of literally itself - if it did, the word would long since have come to mean "virtually" or "figuratively" - but from a natural tendency to use the word as a general intensive, as in They had literally no help from the government on the project, where no contrast with the figurative sense of the words is intended.